The Technical Auditor: Using AI to Master Error Analysis in A-Level and GCSE Science

The Evaluation Hurdle: Why Lab Reports Stumble at the Finish Line

In the world of GCSE and A-Level Science, there is a significant difference between following a method and conducting an investigation. Most students can follow a set of instructions to perform a titration or measure the acceleration of a falling object. However, when it comes to the Evaluation section—often where the Assessment Objective 3 (AO3) marks are concentrated—many struggle to move beyond generic phrases like 'human error' or 'incorrect timing'.

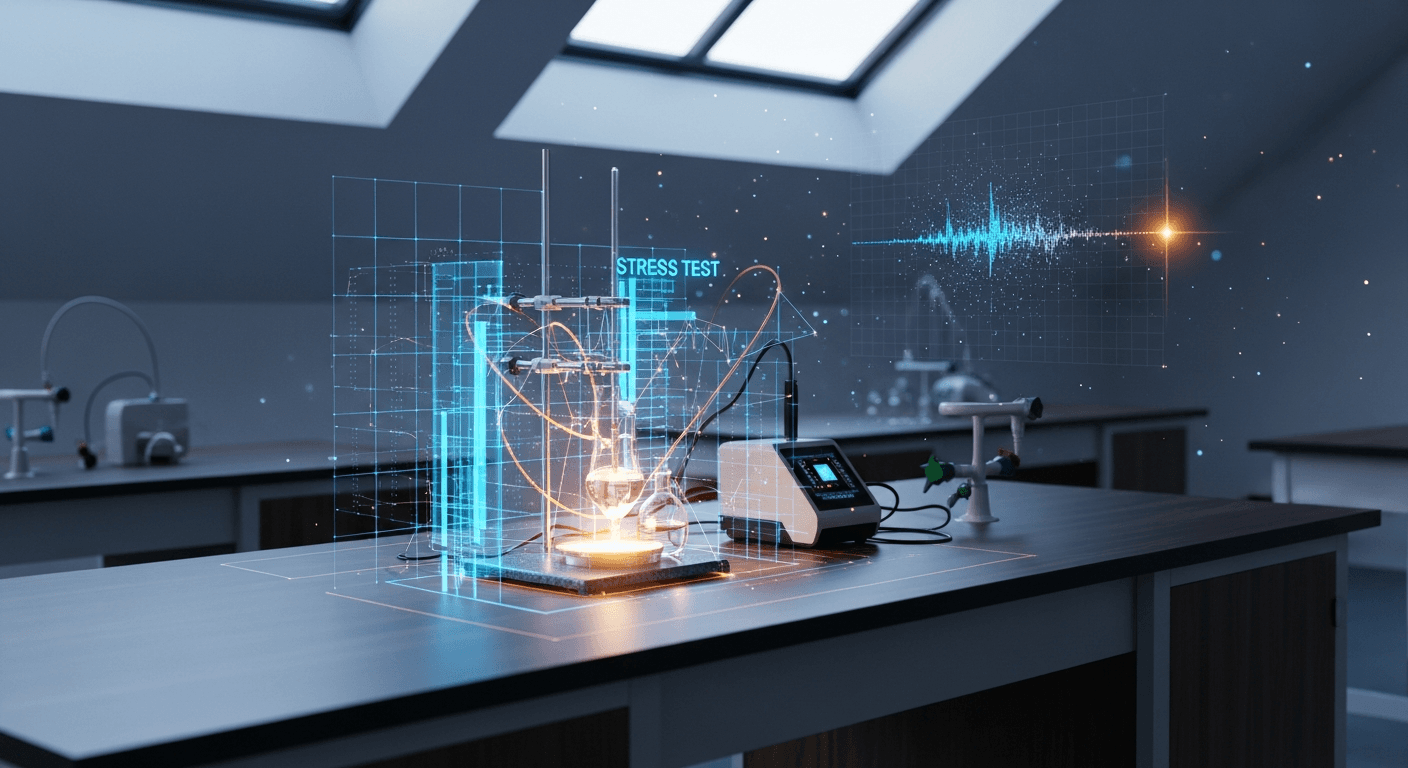

The Practical Endorsement at A-Level (assessed against the CPAC) and the required practicals at GCSE demand a deeper level of critical thinking. Examiners are not looking for perfect results; they are looking for a student who understands why their results might be imperfect. This is where Artificial Intelligence is shifting from a simple writing assistant to a technical auditor. By using AI to stress-test your methodology and interpret data outliers, you can transform a mediocre lab report into an A*-level piece of scientific analysis.

AI as Your Technical Auditor: Stress-Testing the Methodology

Instead of asking an AI to 'write my lab report', savvy students are using it to audit their experimental design. A technical audit involves feeding the AI your proposed method and asking it to identify potential sources of systematic error. For instance, if you are conducting an experiment on the rate of reaction, you might ask an AI to estimate how a 1 °C fluctuation in room temperature could propagate through your final data points.
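To get a feel for the size of that temperature effect yourself, here is a minimal sketch based on the Arrhenius equation. The activation energy of 50 kJ/mol is an illustrative assumption, not a value for any particular reaction, and the function name `rate_ratio` is hypothetical.

```python
import math

R = 8.314  # molar gas constant, J mol^-1 K^-1


def rate_ratio(ea_j_per_mol, t1_kelvin, t2_kelvin):
    """Return k(T2) / k(T1) from the Arrhenius equation.

    Assumes the pre-exponential factor is the same at both temperatures.
    """
    return math.exp((ea_j_per_mol / R) * (1 / t1_kelvin - 1 / t2_kelvin))


# Assumed values, for illustration only
ea = 50_000.0     # activation energy in J/mol (hypothetical)
t_start = 293.15  # 20 °C expressed in kelvin
t_drift = 294.15  # 21 °C expressed in kelvin

ratio = rate_ratio(ea, t_start, t_drift)
percent_change = (ratio - 1) * 100
print(f"Rate constant changes by about {percent_change:.1f}% for a 1 °C rise")
```

Under these assumptions the rate constant shifts by roughly 7%, which may be larger than the uncertainty in your timing. A figure like this gives you something concrete to discuss in your evaluation.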

By using personalised study support, you can prompt an LLM to play the role of a sceptical lead researcher. Try inputting: 'I am measuring the Young modulus of a wire. Here is my setup. What systematic errors might occur if the wire is not perfectly straight at the start, and how would this affect the gradient of my graph?' This allows you to 'defend' your results by showing you have considered the physics of the error, rather than just the outcome.

Decoding the Outliers: Why Your Result Isn't 'Wrong'

In school labs, equipment is rarely perfect. You might find a data point that sits miles away from your line of best fit. The instinct for many students is to ignore it or label it as an 'anomaly' without further thought. To hit the top marks, you need to explain the physical mechanism behind that outlier.
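Before you ask why a point is off the line, it helps to confirm which point is furthest from it. The following is a minimal sketch, not a prescribed method: it fits a least-squares line and reports the largest residual. The force and extension readings are invented for illustration, and the function names are hypothetical.

```python
def best_fit(xs, ys):
    """Return (gradient, intercept) of the least-squares straight line."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    gradient = sxy / sxx
    intercept = mean_y - gradient * mean_x
    return gradient, intercept


def residuals(xs, ys):
    """Return the vertical distance of each point from the fitted line."""
    gradient, intercept = best_fit(xs, ys)
    return [y - (gradient * x + intercept) for x, y in zip(xs, ys)]


# Hypothetical readings: force in N, extension in mm
force = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
extension = [0.5, 1.0, 1.5, 2.9, 2.5, 3.0]

res = residuals(force, extension)
# Index of the point with the largest absolute residual
worst = max(range(len(res)), key=lambda i: abs(res[i]))
print(f"Largest residual at force = {force[worst]} N: {res[worst]:+.2f} mm")
```

Keep in mind that an outlier also drags the fitted line towards itself, so a large residual is a prompt to investigate, not proof that the reading is wrong.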

AI can help you model alternative outcomes. If your value for the Planck constant is 20% higher than the accepted value, you can use AI to cross-reference your specific equipment list with known physical limitations. Is it a parallax error from the voltmeter? Is it internal resistance in the battery that wasn't accounted for? When you use AI-powered practice platforms, you can input your raw data and ask the AI to suggest three distinct physical reasons for a specific deviation. This doesn't do the thinking for you; it provides a range of scientific hypotheses that you must then validate against your actual lab experience.
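One quick check you can run yourself before asking for hypotheses is to compare the percentage difference from the accepted value with your own estimated percentage uncertainty. The sketch below assumes a hypothetical measured value and a hypothetical 8% uncertainty; only the accepted Planck constant is a real figure, and the function names are illustrative.

```python
H_ACCEPTED = 6.62607015e-34  # Planck constant in J s (exact SI value)


def percentage_difference(measured, accepted):
    """Percentage gap between a measured value and the accepted value."""
    return abs(measured - accepted) / accepted * 100


def exceeds_uncertainty(measured, accepted, percent_uncertainty):
    """True when the gap to the accepted value is larger than the
    estimated percentage uncertainty of the measurement."""
    return percentage_difference(measured, accepted) > percent_uncertainty


# Hypothetical result from a school experiment, about 20% too high
h_measured = 7.95e-34
percent_unc = 8.0  # hypothetical combined percentage uncertainty

diff = percentage_difference(h_measured, H_ACCEPTED)
flag = exceeds_uncertainty(h_measured, H_ACCEPTED, percent_unc)
print(f"Percentage difference: {diff:.1f}%")
print(f"Larger than estimated uncertainty: {flag}")
```

If the gap is larger than your uncertainty, random scatter alone is unlikely to explain it, which points towards a systematic cause such as the ones listed above.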

The Maths of Mistakes: Mastering Percentage Uncertainty

A crucial part of A-Level Physics, Chemistry, and Biology is the calculation of uncertainty. Many students lose marks because they confuse the uncertainty in a single reading with the uncertainty in a measurement that depends on two readings. For any measured value, the percentage uncertainty is:

\( \text{Percentage Uncertainty} = \frac{\text{Uncertainty}}{\text{Measured Value}} \times 100 \)

But if you are measuring a change in length, you have two readings, meaning your absolute uncertainty doubles.
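As a worked illustration of that doubling, here is a short sketch. The ruler readings are hypothetical, and the ±0.5 mm reading uncertainty assumes the common convention of half the smallest division; check which convention your exam board uses.

```python
def percentage_uncertainty(absolute_uncertainty, measured_value):
    """Percentage uncertainty = uncertainty / measured value x 100."""
    return absolute_uncertainty / measured_value * 100


reading_uncertainty_mm = 0.5  # assumed: half the smallest division of a mm ruler

# Hypothetical ruler readings at the start and end of a stretch
start_mm = 102.0
end_mm = 127.0

# The change in length depends on two readings, so their
# absolute uncertainties add together.
extension_mm = end_mm - start_mm
extension_uncertainty_mm = 2 * reading_uncertainty_mm

single = percentage_uncertainty(reading_uncertainty_mm, end_mm)
change = percentage_uncertainty(extension_uncertainty_mm, extension_mm)
print(f"Single reading of {end_mm} mm: {single:.2f}%")
print(f"Extension of {extension_mm} mm: {change:.1f}%")
```

The extension is much smaller than either reading while its absolute uncertainty is twice as large, so its percentage uncertainty ends up around ten times larger in this example.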

AI can be a useful check on your error propagation. You can provide the AI with your raw measurements and your calculated final uncertainty, then ask it to verify the logic, confirming the key steps yourself. This is particularly useful for equations involving powers and roots, such as the period of a pendulum, where \( T = 2\pi \sqrt{\frac{l}{g}} \). Understanding how the uncertainty in length (l) affects the uncertainty in the period (T) is a high-level skill that AI can help you visualise through step-by-step breakdowns.
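For the pendulum, the period depends on the square root of the length, so the usual power rule says the percentage uncertainty in T is half the percentage uncertainty in l (treating g as exact). The sketch below compares that rule with a direct recalculation. The length, its uncertainty and the value g = 9.81 m/s² are assumed for illustration, and the function names are hypothetical.

```python
import math


def period(length_m, g=9.81):
    """Period of a simple pendulum: T = 2 * pi * sqrt(l / g)."""
    return 2 * math.pi * math.sqrt(length_m / g)


def percent_unc_in_period(percent_unc_in_length):
    """Power rule: T is proportional to l^(1/2), so the percentage
    uncertainty in T is half that in l (g treated as exact)."""
    return 0.5 * percent_unc_in_length


# Hypothetical measurement: 0.500 m with an uncertainty of 0.010 m (2%)
length = 0.500
length_unc = 0.010
percent_unc_length = length_unc / length * 100

rule_estimate = percent_unc_in_period(percent_unc_length)

# Direct check: recalculate T at the upper limit of the length
t_nominal = period(length)
t_upper = period(length + length_unc)
direct_estimate = (t_upper - t_nominal) / t_nominal * 100

print(f"Power rule: {rule_estimate:.2f}%")
print(f"Direct recalculation: {direct_estimate:.2f}%")
```

The two estimates agree closely for small uncertainties, which is a useful sanity check to run on any step-by-step breakdown an AI gives you.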

Meeting the CPAC Standards: Proving Your Competence

For UK students, the Common Practical Assessment Criteria (CPAC) are the gatekeepers of your practical endorsement. You must demonstrate that you can 'use appropriate software and/or precision instruments' and 'evaluate results and draw conclusions'.

Using AI to interpret your data can support CPAC 5 (Researches, references and reports) and CPAC 4 (Makes and records observations). When you cite a reason for an error that you discovered through an AI-led 'stress test', you are showing a level of independence and analytical rigour that is highly valued by universities. Teachers can also benefit from this by using tools to generate practice papers that focus specifically on the 'Evaluation' questions that frequently appear in Paper 3 of many A-Level science specifications.

Practical Tips for Integrating AI into Your Lab Workflow

1. The Pre-Lab Audit: Before you even pick up a beaker, run your method through an AI. Ask: 'What are the three most likely sources of systematic error in this specific setup?'

2. The Outlier Investigation: If a result looks odd, don't delete it. Describe the setup to the AI and ask for the physical laws that might have caused that specific shift.

3. The Uncertainty Check: Use AI to verify your error propagation calculations, especially when dealing with squared or square-rooted variables in Physics.

4. The Comparison: Ask the AI for the 'accepted value' of a constant and how researchers in professional labs mitigate the errors you encountered in your school environment.

By treating AI as a technical auditor rather than a ghostwriter, you develop the genuine scientific literacy required for higher education. You aren't just getting the 'right' answer; you are learning to navigate the messy, imperfect reality of real-world data.
